Dockerfiles and Docker Images explained

Posted on October 26, 2023 • 6 minutes • 1275 words


Container technologies have fundamentally transformed the way we package and ship applications. The team behind Docker was one of the pioneers of the container movement, and also one of the first to provide a standardised and portable environment through Docker images.

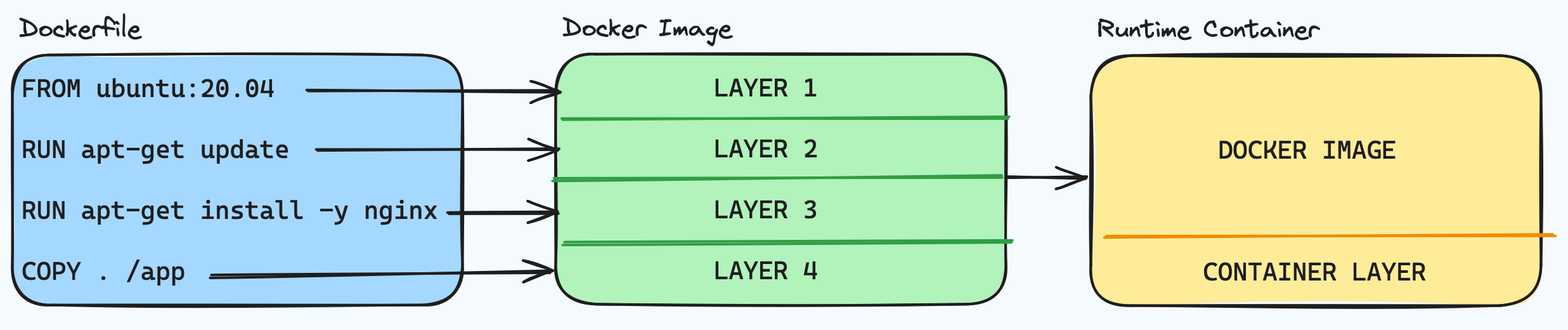

In this article, we’ll explore the relationship between the Dockerfile and the Docker image, investigating how each instruction/command in the Dockerfile contributes to the creation of Docker image layers.

The Dockerfile

Some like to think of a Dockerfile as a sort of shell script, but it’s more than that; it’s a blueprint that describes, step by step, how a Docker image is constructed. Let’s take a closer look at the main elements that make up a Dockerfile, and investigate some of the reasons Docker has become a key piece of software for many organisations today.

Base Images

The Dockerfile begins with a base image, which sets up the operating system and/or runtime environment. This choice significantly influences the characteristics and capabilities of the final Docker image. The exception is a multi-stage build, where your build-time container uses a different base image from your runtime container, and only the runtime base ends up in the final image. You can read about some of the advantages of multi-stage builds in my other article, Optimising Dockerfiles.
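As a rough illustration of the multi-stage idea, here is a minimal sketch. It assumes a hypothetical Go project (with a go.mod file) in the build context; the image tags, paths and binary name are illustrative choices, not something taken from this article.

```dockerfile
# Build stage: a larger base image that carries the compiler toolchain.
FROM golang:1.22 AS build
WORKDIR /src
# Assumes the build context contains a Go module (hypothetical project).
COPY . .
RUN CGO_ENABLED=0 go build -o /out/app .

# Runtime stage: a small base image that receives only the compiled binary.
FROM alpine:3.19
COPY --from=build /out/app /usr/local/bin/app
CMD ["/usr/local/bin/app"]
```

Only the last stage becomes the final image, so its base image is the one that determines what ships.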

Instructions

Each line in the Dockerfile that begins with a Docker keyword represents an instruction, which is akin to a command. Instructions do things like install dependencies, copy files, set environment variables and define runtime configuration.

For example, consider this simple Dockerfile:

    FROM ubuntu:22.04
    COPY . /app
    RUN make /app
    CMD python /app/app.py

Notice that each line begins with a Docker command, then continues with what looks like a shell command. In some cases, it is a shell command, but Docker must first know what to do with that command, and this is why we have Docker syntax.

Although Docker commands are case-insensitive, it’s a widely used convention to write them in uppercase, so they’re easy to distinguish from your own commands, which appear as arguments to the Docker commands. If you’re familiar with bash -c, Docker does much the same thing for commands such as RUN and CMD when they are written this way: it hands the arguments to a shell (/bin/sh -c by default on Linux), in effect saying “run the following as a script”.
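To make the shell comparison concrete, here is a small sketch contrasting the two ways of writing RUN and CMD. The echo commands are placeholders chosen for illustration only.

```dockerfile
FROM ubuntu:22.04

# Shell form: Docker runs this through /bin/sh -c,
# so the shell expands $HOME before echo sees it.
RUN echo $HOME

# Exec form: the JSON array is executed directly, with no shell in between,
# so no shell processing takes place and $HOME is not expanded.
RUN ["echo", "$HOME"]

# The same two forms exist for CMD; exec form is shown here.
CMD ["echo", "hello from exec form"]
```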

For example, let’s break down what’s going on:

| Docker Syntax | Your Command (arguments) | What's happening |
|---|---|---|
| FROM | ubuntu:22.04 | Creates a layer from the ubuntu:22.04 Docker image from the default registry (Docker Hub) |
| COPY | . /app | Adds files from your Docker client’s current directory . to the directory /app |
| RUN | make /app | Builds your application using the make utility |
| CMD | python /app/app.py | Specifies what to do to run the container |

🔖 You can view a complete list of Dockerfile instructions in the official documentation.

Layered Construction

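Docker images are built up in a layered fashion. Instructions that change the filesystem, such as RUN, COPY and ADD, each create a new layer, and together these form a layered filesystem. Other instructions, such as CMD or ENV, only add metadata to the image configuration.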

Layering enables efficient caching and incremental builds. If a change occurs, only the affected layers and subsequent layers need to be rebuilt, significantly speeding up development work-cycles.
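The effect on rebuilds can be sketched with an annotated Dockerfile. The package and the compile step are assumptions made for the example; the point is where the cache stops being reused.

```dockerfile
FROM ubuntu:22.04

# This step depends only on the base image and its own text, so after the
# first build it is normally served from the cache.
RUN apt-get update \
    && apt-get install -y --no-install-recommends python3 \
    && rm -rf /var/lib/apt/lists/*

# COPY is cache-checked against the content of the files being copied.
# Editing any file in the build context invalidates this layer...
COPY . /app

# ...and every instruction after it is rebuilt too, even though this line
# itself has not changed.
RUN python3 -m compileall /app
```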

Another perspective

Consider another simple Dockerfile, and this time think of each line as a layer, rather than a command:

1FROM ubuntu:20.04

2RUN apt-get update

3RUN apt-get install -y nginx

4COPY . /app

The FROM instruction initializes the image with a base layer of Ubuntu 20.04.

Subsequent instructions add layers by updating the package list, installing Nginx, and copying local files into the image.

This layered approach gives you a granular understanding of the image’s composition, which makes it easier to diagnose issues along the way.

Docker Images

A Docker image is the end result of executing the instructions listed within a Dockerfile. It serves as a standalone, executable package which contains app code, runtime, libraries, and dependencies. This makes images portable and lightweight.

Here’s a diagram on how the Docker image relates to the Dockerfile:

When an image layer is successfully written (because the command in the Dockerfile was successful), the layer becomes immutable. If a command results in a non-zero exit status, its layer is not written, the build fails, and no image is produced.
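A deliberately failing build shows this behaviour. The file names below are made up for the illustration.

```dockerfile
FROM ubuntu:22.04

# Succeeds (exit status 0): the layer is written and becomes immutable.
RUN echo "step 1 ok" > /step1.txt

# Fails: test -f returns a non-zero exit status because the file does not
# exist, so no layer is written and the build stops here.
RUN test -f /does-not-exist

# Never reached, and no final image is produced.
RUN echo "step 3" > /step3.txt
```

On the next build attempt the first RUN layer can still be reused from the cache, because it was written successfully before the failure.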

When running an instance of the final image (such as when using the docker run command), a new read-write layer is added on top so that the image can run as a container; processes can run, volumes can be mounted, and so on. This read-write layer is also known as the container layer.

Layered like a Cake 🍰

This layered structure ensures efficiency in terms of storage, caching, and incremental builds, emphasising the modularity of the Docker image. This makes debugging of builds easier, as it’s possible to identify the exact location - or layer - of a problem in a build and experiment from there instead of building the whole thing repeatedly.

For example, say you’re investigating a Docker build failure on layer 6, which was previously building successfully. One possible way to begin your investigation would be to temporarily remove the problem layer and all subsequent layers from the Dockerfile and run the build again. The build should be nice and fast because of layer caching (more on caching in the next article), and you can then start a container from the resulting image with docker run, or use docker exec against a container that is already running, to have a poke around. Now you can manually run the command which fails to see if you’re able to replicate the issue and resolve it. Once you have your solution, you can modify the Dockerfile with your fix and re-add the subsequent commands.
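Here is a sketch of what that temporary Dockerfile might look like. The make step, the image tag debug-partial and the server path are hypothetical stand-ins for whatever your real build does.

```dockerfile
FROM ubuntu:22.04
RUN apt-get update && apt-get install -y --no-install-recommends make
COPY . /app

# --- temporarily disabled while debugging the failing step ---
# RUN make -C /app
# CMD ["/app/bin/server"]

# Build and explore the partial image (run these in your terminal,
# not as part of the Dockerfile):
#   docker build -t debug-partial .
#   docker run --rm -it debug-partial bash
#
# Then, inside the container, run the failing command by hand:
#   make -C /app
```

The steps above the disabled lines should come straight from the cache, so each experiment only costs the time of the command you are investigating.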

This can help shorten your development cycle and allows you to experiment incrementally, which is especially useful in long, complex builds.

Consistency & Portability

Dockerfiles support reproducibility by defining the environment and dependencies that an app or process requires. This means an environment can be recreated on totally different physical systems, and it gives teams a standardised development and deployment process. You can also share and store Dockerfiles alongside your Infrastructure as Code, as they’re just text files.

The layered structure of Docker images, combined with compression technologies, contributes to their compact size and ease of distribution. When an image is sent to or received from an image registry, such as Docker Hub or Google Artifact Registry, the layers of the Docker image come into play once again. You’ll see this when you pull an image down from a registry: Docker doesn’t download the image in one piece, but downloads the layers of the image and extracts them one by one, recreating the image on your local machine.

Docker uses a technology called a Union File System to stack the layers of Docker images into a single filesystem. The Union File System is a way of combining multiple file systems into a single view, allowing files and directories from different file systems to be overlaid on top of each other. This technology is crucial for Docker’s layered image approach. The Union File System is a bit out of scope for this article, but if you’re interested in learning more about the topic, take a look at this great blog article how-docker-images-work-union-file-systems by Eli Uriegos.

Wrap Up

Understanding the link between a Dockerfile and a Docker image is extremely useful to engineers when creating efficient container builds. Dockerfiles provide a clear recipe for constructing images, supporting reproducibility, modularity, and portability.

In the next article, we’ll take a look at how we can use this knowledge to optimise our Dockerfiles and Docker images, and make the best use of layer caching while keeping the image sizes as small as possible.